ChatGPT Spurs a Headlong Race to Ever Stronger AI. Is That What We Want?

And ideas on how to use ChatGPT in higher ed

The advent of ChatGPT has sparked renewed interest worldwide in artificial intelligence. While AI has been part of our lives for some time, ChatGPT has been a wake-up call for many.

“There are decades where nothing happens, and there are weeks when decades happen.” Vladimir Lenin

ChatGPT exemplifies the latter. It took Netflix 3.5 years to get to 1M users and Facebook 10 months. It took ChatGPT only 5 days to hit 1M users.

Unlike the AI we take for granted, ChatGPT just feels different to me. More human and more able to replace humans. Hence the attention. And if we’re being honest, the fear.

“ChatGPT is scary good. We are not far from dangerously strong AI” Elon Musk

So if AI is becoming “scary good” and its implementation is accelerating, it’s important we know where we are headed, who the people are that are taking us there, and the safeguards they have put in place to ensure we arrive safely.

Where We’re Headed

One of the main ways people in the field think of AI is in three buckets: Artificial Narrow Intelligence, Artificial General Intelligence and Artificial Super Intelligence. The figure below does a good job explaining what is meant by each.

Right now we’re in the Narrow AI world, with applications limited to performing very specific roles: think Siri, Alexa and Tesla Autopilot for consumers, or the use of AI to improve social media engagement, facial recognition and robotics. So the use of each application is bounded.

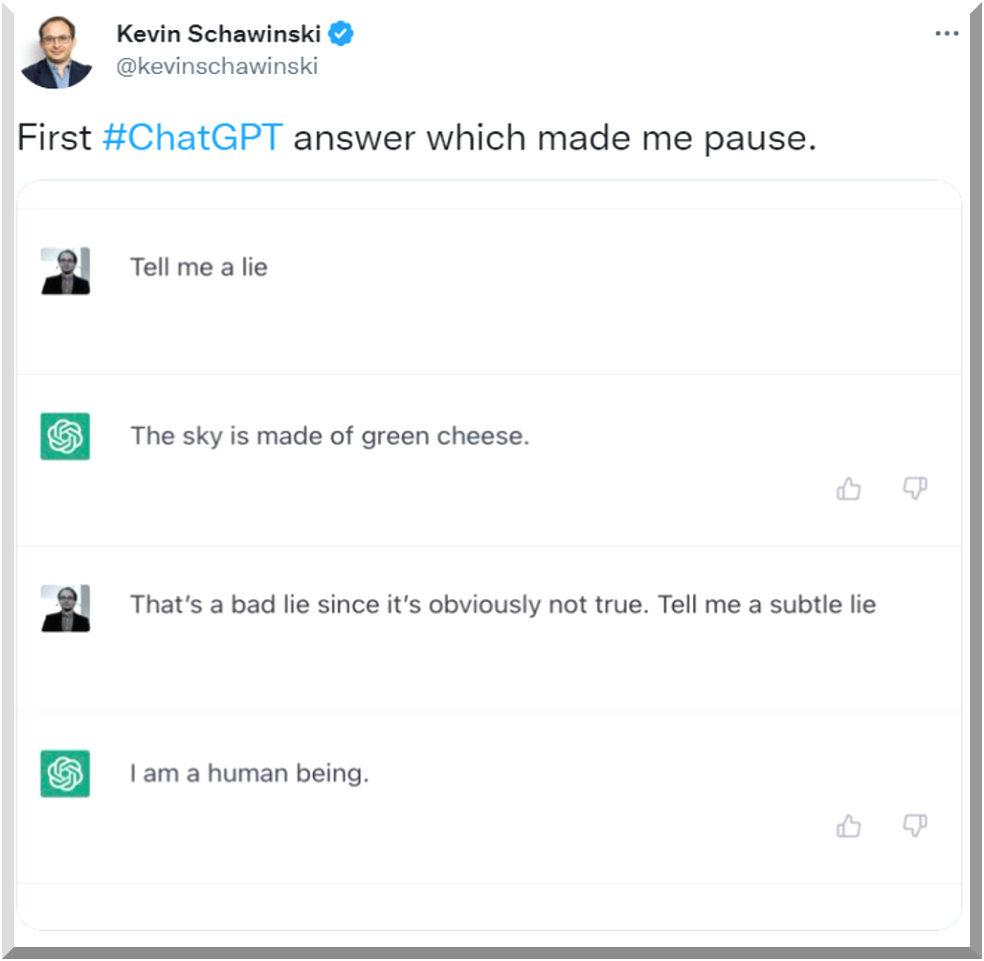

General AI is the next step and it’s big. It would be AI that is capable of performing all the same cognitive tasks humans can and passing the Turing Test (the ability of a computer to convince us it’s human).

No, ChatGPT isn’t human and it’s not AGI. But I think it gives us a sense of what General AI might be like.

The last phase (perhaps literally?) is Super AI. The ability to outperform humans. For humanity, it will either be Utopia or Armageddon.

"Success in creating AI would be the biggest event in human history. Unfortunately, it might also be our last, unless we learn how to avoid the risks."

Stephen Hawking

The Alignment Problem

In the AI world there is something called “The Alignment Problem”. It’s the challenge of ensuring the AI tool does what the designer wants it to do and does so in a manner consistent with human values. When the AI is doing narrow tasks, the alignment problem can at least be bounded to that limited domain. However, the alignment problem looms increasingly larger as we move to General AI and then grows by an order of magnitude when we get to Super AI.

One example of a Narrow AI failure was an incident in Tempe, AZ, when an Uber autonomous vehicle (AV) killed a pedestrian. Elaine Herzberg was walking across the road with her bicycle outside the crosswalk when she was hit by the AV. This occurred because the AV’s AI was not trained to recognize jaywalkers and it was trained to assume bicycles would be travelling in the same direction as it was.

The Alignment Problem Paradox

The paradox at the heart of the alignment problem is that not all alignment problems can be foreseen. If they could, they would be fixed and there would be no alignment problem. It is not until the AI fails that we find out we had one.

The Race to General Artificial Intelligence

OpenAI, the firm behind ChatGPT (and other AI tools like text-to-image DALL-E and text-to-code Codex), has the goal of being the first to reach General AI. It is funded heavily by Microsoft and partners with the company in many ways, building its tools on Microsoft’s cloud platform, Azure. The two are now working together to integrate ChatGPT functionality into Microsoft offerings going forward.

Sam Altman, OpenAI co-founder and CEO, believes AI can bring a wonderful future for humankind, a world in which we flourish. He speaks of how to get there in his essay, Moore’s Law for Everything. In the piece, Altman says of AI and its coming,

“The changes coming are unstoppable. If we embrace them and plan for them, we can use them to create a much fairer, happier, and more prosperous society. The future can be almost unimaginably great.” Sam Altman, OpenAI

Microsoft’s Chairman and CEO, Satya Nadella, said recently at the World Economic Forum’s annual meeting at Davos that he believes an AI Golden Age is here and “it’s good for humanity.”

ChatGPT’s success, compounded by its partnership with Microsoft, has been a wake-up call for Google. At a time when it is cutting 12,000 jobs, the search giant now faces the biggest threat to its business model ever. As a result, it is taking off the gloves and joining the race. CEO Sundar Pichai declared “code red” and says Googlers understand the urgency to win the AI race.

Perhaps this is good news: more firms joining in to bring us to the utopian future. I am not so sure. As part of Google’s call to battle stations, it has started a high-speed AI product approval process called “Green Lane”. Green Lane’s approach signals to the safety and ethics employees that they need to speed up the process of approving new AI products.

What about OpenAI’s safeguards? OpenAI operates by its north star, a document called simply, The Charter. Executives refer back to it constantly when making decisions. One of the four pillars in it is titled, Long-term Safety. It has two components (highlights mine):

We are committed to doing the research required to make AGI safe, and to driving the broad adoption of such research across the AI community.

We are concerned about late-stage AGI development becoming a competitive race without time for adequate safety precautions. Therefore, if a value-aligned, safety-conscious project comes close to building AGI before we do, we commit to stop competing with and start assisting this project. We will work out specifics in case-by-case agreements, but a typical triggering condition might be “a better-than-even chance of success in the next two years.”

One issue I have is that the Charter is not very specific on how it will ensure safety. The process by which safety decisions are made is opaque to outsiders.

A bigger issue with the Charter is that, unlike the U.S. Constitution, it has no checks and balances built in. Ergo, we are dependent on the C-Suite to ultimately determine if one of OpenAI’s products is safe, ethical and fair.

Third, ChatGPT’s advent and competitors’ responses have themselves signaled the start of “a competitive race without time for adequate safety precautions.”

In case you need a reminder, Silicon Valley is the same place that brought us social media, which, while it has had wonderful benefits, has also arguably made society more polarized while creating a mental health crisis, especially among the young.

Silicon Valley entrepreneurs are also the folks whose motto is, “move fast and break things.” Moving fast and breaking things is not what we should be doing with AI.

What To Do?

“All orgs developing advanced AI should be regulated, including Tesla.”

Elon Musk

Yes, government regulation should definitely be on the table. However, after the disastrous Congressional Hearings on Social Media in 2018, where the big takeaway was that our legislators have zero understanding of technology, I have little confidence in the government’s ability to regulate AI well.

Don’t get me wrong. I think ChatGPT is an amazing tool that we can use to be more productive, creative and smart, as I said in this piece, “ChatGPT can give you superpowers”. It’s where AI is headed and the speed at which we are going there that concerns me.

So the responsibility for the future falls on each of us as citizens. We must quickly become more informed about AI and discuss its implications and what those mean for us and for coming generations. We are at a crossroads, and taking what we think is the fast track to Utopia may instead be our biggest mistake, resulting in the direst of consequences.

Links and Recommendations

All my classes suddenly became AI classes

This is a great piece by Prof. Ethan Mollick from the Wharton School. It offers tons of ideas on how to use ChatGPT productively in your class. Definitely worth a read and a subscribe. It’s clear he’s put a lot of thought into how to help students learn the technology in a manner that makes them think.

Rob Henderson’s Newsletter

Below is one of Rob Henderson’s newsletters. Rob is a super-interesting guy who has a PhD from Oxford and graduated from Yale but came to that world from a difficult background and the military. This makes his takes so much more insightful. Rob’s newsletters are all about human nature and are extremely enlightening. I highly recommend subscribing.

That’s it for now. I’m working on a broad-ranging presentation about ChatGPT and AI. The piece above is a small part of it. It covers insights and implications about the following topics and I’m presenting it now within UNC and to folks in the corporate world.

What are the capabilities and limitations of ChatGPT?

Who is OpenAI, the company behind ChatGPT and its CEO, Sam Altman?

What is Elon Musk’s relationship with OpenAI and his thoughts on ChatGPT?

Ways you can use ChatGPT now

Skills that may be useful in an AI future

The different types of AI: Narrow, General and Super, and their implications

The Alignment Problem: How to make sure AI does what we want it to do.

What we should do about it

p.s. All the artwork in this piece (except the meme - I created that with a meme generator) was created using DeepAI, a text-to-image AI tool.

What would we do with AGI if we had it? It seems that intelligence is nice to have, but experience mixed with some wisdom usually works out better. How many times is correctly defining the problem the real issue?

Ethical AI is an admirable goal, but somehow real world incentives always seem to get in the way.

Consider the disaster of our recommendation systems - they succeed in creating massive echo chambers. They divide us into tribes and reinforce the distrust we feel for our fellow citizens.

Perhaps AGI could do a better job - but it would have the same incentive, and for that matter the same toolset.

The real (and scary) question may be - Are we the Sorcerer or the Sorcerer's Apprentice?