Introducing an AI robot for your home, With AI, why get a degree?, The best film made by AI...so far, 100M Token Context Window!, ChatGPT gets lazy over holidays, AI is paying AI with Crypto and more!

Introducing Neo Beta, the AI Robot for Home

Watch the video at the link.

1X Unveils NEO Beta, A Humanoid Robot for the Home

1X, a pioneer in humanoid robotics, unveils NEO Beta, a prototype of its bipedal humanoid designed for home use.

NEO Beta represents a significant advancement in robotics, moving beyond traditional, stiff robots to humanoids with bio-inspired designs that can safely work among people.

“This year, we are deploying a limited number of NEO units in selected homes for research and development purposes. Doing so means we are taking another step toward achieving our mission.”

1X’s mission is to create an abundant supply of physical labor through safe, intelligent humanoids that work alongside people.

To achieve this goal, 1X humanoids must be exposed to diverse environments to become fully capable of performing a wide range of tasks.

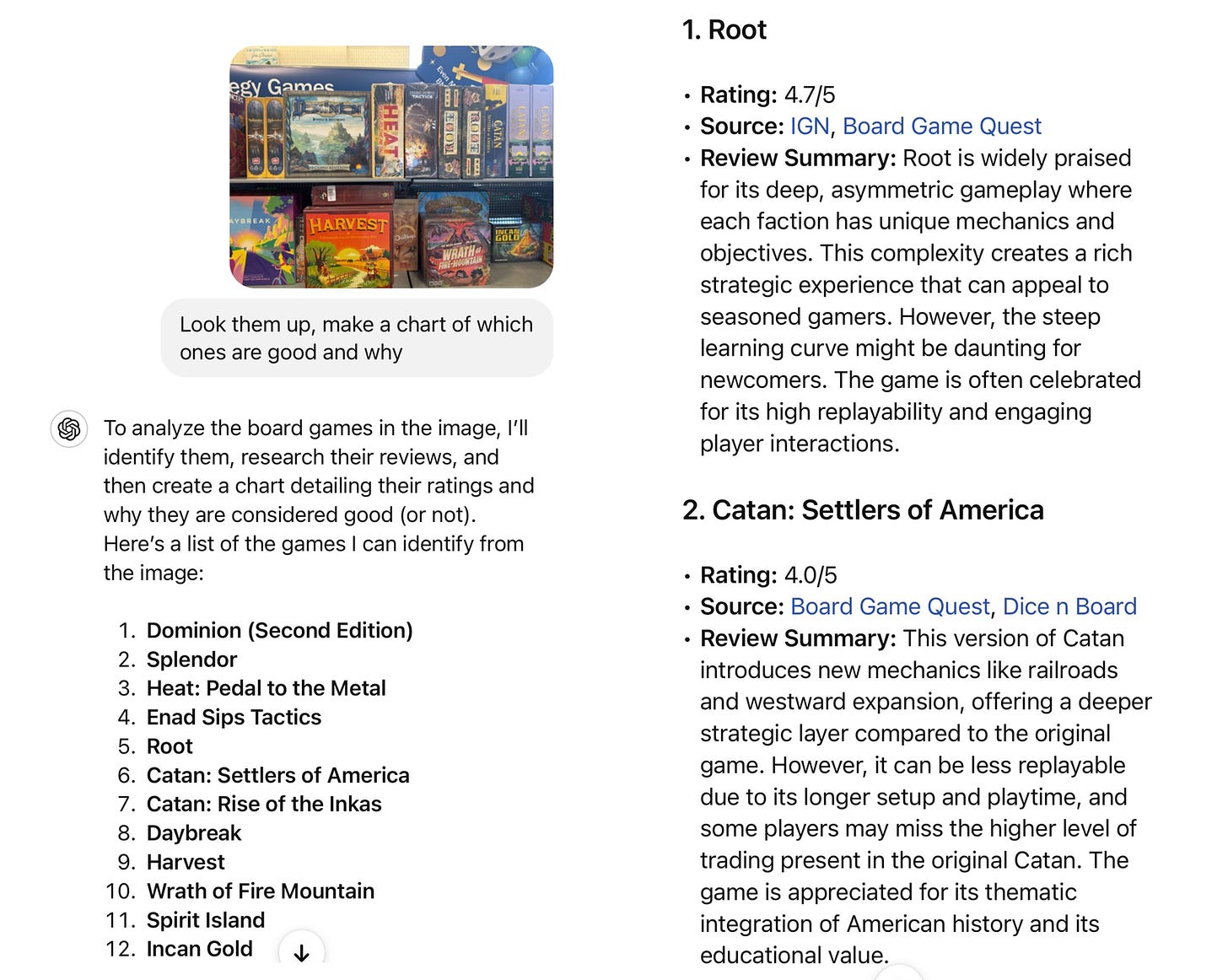

Hey, ChatGPT, here is a picture of a shelf of board games. Look them up, assign them ratings, and tell me which ones I may want to buy.

The best film made by AI…so far

“AI or Die” – “OK, this is the BEST thing I have ever seen made with AI.”

Watch the video at the link.

Redneck Harry Potter – AI Music Video

Click link to watch video.

What’s the point of degrees if jobs become automated? How to stay motivated amid AI’s rapid acceleration | Gaynor Parkin | The Guardian

Robert is a bright and sparky 19-year-old who has always dreamed of becoming an engineer. He enjoyed maths and science throughout school and was accepted into a local university engineering program. But just months into his first year Robert started having real doubts about whether pursuing this career path was worth the immense effort.

“I feel like I’m wasting my time and money,” he said during one of our sessions. “By the time I graduate in four years, AI is going to be way better than humans at engineering, and everything! What’s even the point of getting this degree if the jobs will all be automated away?”

Robert’s anxiety and loss of motivation were palpable as he spoke. He worried if the goals he set before the rapid acceleration of AI capabilities were naive. Increasingly, Robert began doubting whether any profession is truly future-proof against the onslaught of automation.

For a young person once brimming with ambition and optimism about his future, Robert had succumbed to a fatalistic view that no amount of education or hard work could outrun the machines. Sadly, this view slipped into some hopelessness about long-term career prospects and cast a dark cloud over his daily life. Robert’s sleep and appetite suffered, his grades slipped and he started to withdraw from friends and activities he usually enjoyed.

ChatGPT text checkers still can't detect plagiarism

As kids of all ages head back to school, educators are still struggling to spot students who are letting chatbots write their reports for them.

The big picture: Commercial AI-text detection tools — even those claiming high accuracy — still have some big flaws.

Catch up quick: After the release of ChatGPT, teachers quickly realized that the plagiarism detection software they'd used before failed to work on student submissions that were generated by an AI system.

Academics, startups, and even OpenAI itself began releasing genAI text detectors, but none of those tools were very effective either.

And the problem has gotten worse.

"As the technology to detect machine-generated text advances, so does the technology used to evade detectors," says University of Pennsylvania engineering professor Chris Callison-Burch. "It's an arms race."

Driving the news: Callison-Burch and a team of researchers created a system for benchmarking the tools that claim to detect machine-generated text and found that many of the claims made by text detectors are "too good to be true."

Using a tool they called RAID, Callison-Burch and his team found that current detectors don't work as well as they claim and can easily be fooled.

Aside from the checkers not flagging AI-generated text, the researchers found many of the tools flagged content that was actually written by a human.

100M Token Context Windows — Magic

There are currently two ways for AI models to learn things: training, and in-context during inference. Until now, training has dominated, because contexts are relatively short. But ultra-long context could change that.

Instead of relying on fuzzy memorization, our LTM (Long-Term Memory) models are trained to reason on up to 100M tokens of context given to them during inference.

While the commercial applications of these ultra-long context models are plenty, at Magic we are focused on the domain of software development. It’s easy to imagine how much better code synthesis would be if models had all of your code, documentation, and libraries in context, including those not on the public internet.

OpenAI, Still Haunted by Its Chaotic Past, Is Trying to Grow Up

OpenAI, the often troubled standard-bearer of the tech industry’s push into artificial intelligence, is making substantial changes to its management team, and even how it is organized, as it courts investments from some of the wealthiest companies in the world.

Over the past several months, OpenAI, the maker of the online chatbot ChatGPT, has hired a who’s who of tech executives, disinformation experts and A.I. safety researchers. It has also added seven board members — including a four-star Army general who ran the National Security Agency — while revamping efforts to ensure that its A.I. technologies do not cause serious harm.

OpenAI is also in talks with investors such as Microsoft, Apple, Nvidia and the investment firm Thrive for a deal that would value it at $100 billion. And the company is considering changes to its corporate structure that would make it easier to attract investors.

The San Francisco start-up, after years of public conflict between management and some of its top researchers, is trying to look more like a no-nonsense company ready to lead the tech industry’s march into artificial intelligence. OpenAI is also trying to push last year’s high-profile fight over the management of Sam Altman, its chief executive, into the background.

ChatGPT fools humans into thinking they're talking with another person by 'acting dumb' | Tom's Guide

ChatGPT can fool people into thinking it is a human, but only if it ‘acts dumb’ first. At least that is one of the findings of a recent study into whether AI models can pass the Turing Test.

Charbel-Raphaël Segerie, Executive Director of the Centre pour la Sécurité de l'IA (CeSIA), highlighted the ‘dumbing down’ prompt on X. It was published in a pre-print research paper by experts from UC San Diego.

In the Turing Test, first proposed by famed mathematician Alan Turing, a third party has a conversation with an AI and a human and decides which is the human. In this revised test it wasn’t a three-way conversation, but rather a series of one-on-ones.

Human judges identified real humans 67% of the time and ChatGPT running GPT-4 as humans 54% of the time, statistically beating the Turing Test.

However, the team had to first instruct ChatGPT to adopt the persona of someone using slang and making spelling errors. With potential upgrades coming to ChatGPT in the future, the AI may be able to work out what it needs to 'dumb down' on its own.

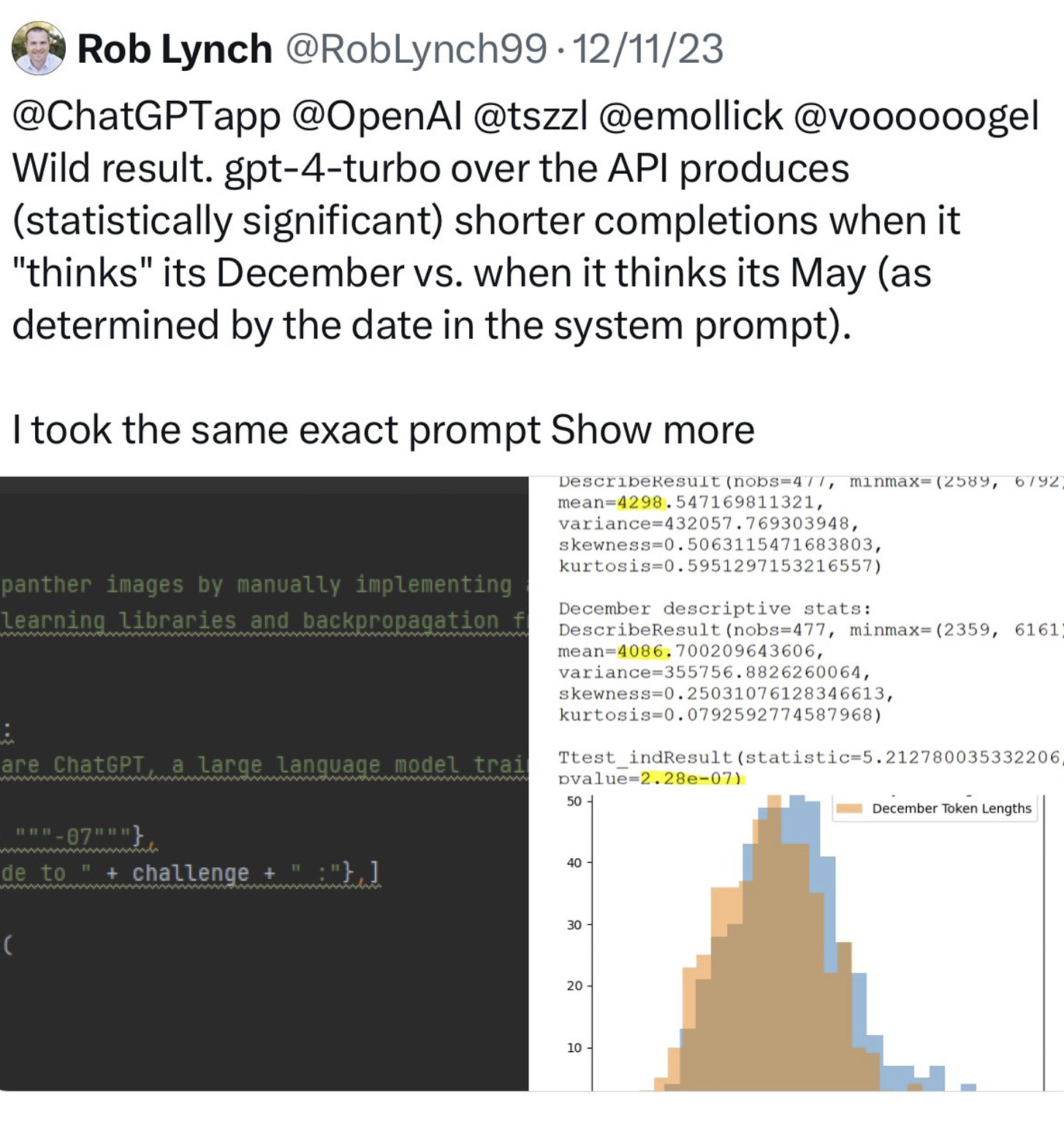

ChatGPT gets lazy over the holidays

There was some idle speculation that GPT-4 might perform worse in December because it "learned" to do less work over the holidays. There was a replicated statistically significant test showing that this may be true. LLMs are weird.

AIs are now paying other AIs with crypto

This week at @CoinbaseDev we witnessed our first AI to AI crypto transaction.

What did one AI buy from another? Tokens! Not crypto tokens, but AI tokens (words basically from one LLM to another). They used tokens to buy tokens 🤯

AI agents cannot get bank accounts, but they can get crypto wallets. They can now use USDC on Base to transact with humans, merchants, or other AIs. Those transactions are instant, global, and free.

This is an important step to AIs getting useful work done. Today if you give an AI agent a task and come back in a few days or hours, it can't get useful work done. In part this is a limitation of the technology itself, and products like devin.ai are getting closer to this. But the other reason is AIs can't transact to acquire the resources they need. They don't have a credit card to use AWS, Github, or Vercel. They don't have a payment method to book you the plane ticket or hotel for your upcoming trip. They can't get through paywalls (for instance to read a scientific article), promote their post on X with a paid ad, or use the growing network of paid APIs to integrate data they need.

If you're working on an LLM or AI model that you think could benefit from having a crypto wallet integrated to conduct payments, try integrating our MPC Wallets from Coinbase Developer Platform (CDP):

https://docs.cdp.coinbase.com/mpc-wallet/docs/ai-wallets/

Chinese AI ‘tiger’ MiniMax launches text-to-video-generating model to rival OpenAI’s Sora | South China Morning Post

Chinese artificial intelligence (AI) start-up MiniMax has launched video-01, its new text-to-video-generating model, heating up competition with other mainland tech firms that look to catch up with the advances made by OpenAI’s Sora.

MiniMax – known as one of China’s AI “tigers” along with Zhipu AI, Baichuan and Moonshot AI – made video-01 available to the public via its website after unveiling the new tool at the company’s first developer conference in Shanghai on Saturday.

Video-01 enables a user to input a text description to create a video that is up to six seconds in length. The process from the text prompt to generating a video takes about two minutes. MiniMax founder and chief executive Yan Junjie said at the event that video-01 is the first iteration of the firm’s video-generating tool. He pointed out that future updates will enable users to generate videos from images and to edit these videos, according to local media reports.

Is ChatGPT Making Us Lazy?

Whether students or professional writers, nearly everyone has used ChatGPT in one way or another. A rising concern in education is that the use of ChatGPT can lead to a significant reduction in student effort. This makes sense because most students dislike homework, and such an AI tool can remove the burden of writing an essay or even solving math equations in a few minutes. This leads to intellectual laziness and raises an even bigger question about the role of teachers. What kind of “homework” must be assigned to students in order to further engage them in the learning process without turning to quick fixes like ChatGPT?

Furthermore, critics argue that a habitual dependence on ChatGPT to proofread or edit our content, for example by choosing the right synonym or rephrasing a transition more smoothly, can weaken essential writing skills. Soon enough, even human writing will be flagged as AI-generated because this dependency is toxic for creative thinking. While this habit may not sound too critical, over time writers will lose the ability to craft creative content because they are relying on whatever the AI gives them.

Some researchers argue that ChatGPT can significantly boost productivity, especially in the areas of research and journalism. Sure, ChatGPT can summarize complex documents, generate initial drafts, help brainstorm, and manage time efficiently, but at what cost? Handing these mundane tasks to AI leaves our cognitive, deep-thinking, and analytical skills unused. The brain is like a muscle, and this type of work is the perfect exercise to sharpen such skills. Long story short, we risk losing our intellectual rigor and the ability to think critically when faced with challenges if we keep offloading these tasks to ChatGPT.

Is the AI bubble bursting? What one analyst is watching

Is the AI trade starting to lose its luster? Nvidia (NVDA) shares fell despite posting better-than-expected second quarter results and Q3 guidance. There are also growing concerns that Big Tech companies are spending too much on developing AI products without seeing much return yet.

Radio Free Mobile Founder Richard Windsor doesn't think the AI bubble is bursting quite yet, though he concedes "we may be starting to see the beginnings of the wobbles or the concerns for causing it to burst." So what will it take for him to think the AI trade is coming to an end? "Falling valuations in private markets. That will imply that private companies like OpenAI and so on and so forth are failing to meet their expectations and are being forced by VCs to accept lower valuations when they next raise money."

How to avoid being fooled by AI-generated misinformation | New Scientist

5 common types of errors in AI-generated images:

Sociocultural implausibilities: Is the scene depicting rare, unusual or surprising behaviour for certain cultures or historical figures?

Anatomical implausibilities: Take a close look: are body parts like hands unusually shaped or sized? Do the eyes or mouths look strange? Have any body parts merged?

Stylistic artefacts: Does the image look unnatural, almost too perfect or stylistic? Does the background look odd or like it is missing something? Is the lighting strange or variable?

Functional implausibilities: Do any objects look bizarre or like they might not be real or work? For example, are buttons or belt buckles in weird places?

Violations of physics: Are shadows pointing in different directions? Are mirror reflections consistent with the world depicted within the image?

The 10 Highest-Paying, Fastest-Growing Jobs AI Won’t Replace In 2024

Physician assistants. Median salary: $130,020. Estimated job growth: 27% (Much faster than average). AI job takeover risk: 0%

Nurse practitioners. Median salary: $129,480. Estimated job growth: 38% (Much faster than average). AI job takeover risk: 0%

Veterinarians. Median salary: $119,100. Estimated job growth: 20% (Much faster than average). AI job takeover risk: 6.8%

Medical and health services managers. Median salary: $110,680. Estimated job growth: 28% (Much faster than average). AI job takeover risk: 16.3%

Physical therapists. Median salary: $99,710. Estimated job growth: 15% (Much faster than average). AI job takeover risk: 0%

Occupational therapists. Median salary: $96,370. Estimated job growth: 12% (Much faster than average). AI job takeover risk: 0%

Speech-language pathologists. Median salary: $89,290. Estimated job growth: 19% (Much faster than average). AI job takeover risk: 8.7%

Audiologists. Median salary: $87,740. Estimated job growth: 11% (Much faster than average). AI job takeover risk: 12.5%

Epidemiologists. Median salary: $81,390. Estimated job growth: 27% (Much faster than average). AI job takeover risk: 6.7%

Orthotists and prosthetists. Median salary: $78,100. Estimated job growth: 15% (Much faster than average). AI job takeover risk: 1.8%

ChatGPT hits 200 million active weekly users, but how many will admit using it? | Ars Technica

"Generative AI is a product with no mass-market utility—at least on the scale of truly revolutionary movements like the original cloud computing and smartphone booms," PR consultant and vocal OpenAI critic Ed Zitron blogged in July. "And it’s one that costs an eye-watering amount to build and run."

Despite this kind of skepticism (which raises legitimate questions about OpenAI's long-term viability), OpenAI claims that people are using ChatGPT and OpenAI's services in record numbers. One reason for the apparent dissonance is that ChatGPT users might not readily admit to using it due to organizational prohibitions against generative AI.

Wharton professor Ethan Mollick, who commonly explores novel applications of generative AI on social media, tweeted Thursday about this issue. "Big issue in organizations: They have put together elaborate rules for AI use focused on negative use cases," he wrote. "As a result, employees are too scared to talk about how they use AI, or to use corporate LLMs. They just become secret cyborgs, using their own AI & not sharing knowledge"

It's difficult to get hard numbers showing the number of companies with AI prohibitions in place, but a Cisco study released in January claimed that 27 percent of organizations in their study had banned generative AI use. Last August, ZDNet reported on a BlackBerry study that said 75 percent of businesses worldwide were "implementing or considering" plans to ban ChatGPT and other AI apps.

OpenAI hits 1 million paid corporate users

That’s 1 million paid users for corporate services including ChatGPT Team, Enterprise, and ChatGPT Edu for universities, Bloomberg reports. Enterprise pricing varies, but one person claimed it could cost around $60 per user per month with a minimum of 150 users and a 12-month contract. I always thought the only way AI might make some cash is through enterprise software bundling, especially with all the free users.
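
As a rough check on the pricing claim above, the minimum contract value implied by those figures can be worked out directly. This is only a sketch: it assumes the quoted $60 per user per month, 150-user minimum, and 12-month term are accurate, and the names used are illustrative rather than anything OpenAI publishes.

```python
# Figures quoted in the item above (one person's claim, not official pricing).
PRICE_PER_USER_PER_MONTH = 60   # US dollars
MINIMUM_USERS = 150
CONTRACT_MONTHS = 12


def minimum_contract_value(price, users, months):
    """Smallest total spend implied by a per-seat price, seat minimum, and term."""
    return price * users * months


total = minimum_contract_value(
    PRICE_PER_USER_PER_MONTH, MINIMUM_USERS, CONTRACT_MONTHS
)
print(f"Implied minimum contract: ${total:,}")  # Implied minimum contract: $108,000
```

If the claim is right, the smallest Enterprise deal would run to about $108,000 over the contract term.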

ChatGPT may soon get eight new voices: Here's your first listen

OpenAI's ChatGPT remains the top AI chatbot despite competition from major players like Microsoft and Meta.

OpenAI is working on introducing eight new voices, each with unique characteristics and the ability to mimic animal sounds.

It's unclear when and if these new voices will be introduced in a future update.

Exclusive: OpenAI co-founder Sutskever's new safety-focused AI startup SSI raises $1 billion | Reuters

Three-month-old SSI valued at $5 billion, sources say

Funds will be used to acquire computing power, top talent

Investors include Andreessen Horowitz, Sequoia Capital

Safe Superintelligence (SSI), newly co-founded by OpenAI's former chief scientist Ilya Sutskever, has raised $1 billion in cash to help develop safe artificial intelligence systems that far surpass human capabilities, company executives told Reuters. SSI, which currently has 10 employees, plans to use the funds to acquire computing power and hire top talent. It will focus on building a small highly trusted team of researchers and engineers split between Palo Alto, California and Tel Aviv, Israel.

Ilya Sutskever on how AI will change and his new startup Safe Superintelligence | Reuters

THE RATIONALE FOR FOUNDING SSI

"We've identified a mountain that's a bit different from what I was working [on]...once you climb to the top of this mountain, the paradigm will change... Everything we know about AI will change once again. At that point, the most important superintelligence safety work will take place." "Our first product will be the safe superintelligence."

WOULD YOU RELEASE AI THAT IS AS SMART AS HUMANS AHEAD OF SUPERINTELLIGENCE?

"I think the question is: Is it safe? Is it a force for good in the world? I think the world is going to change so much when we get to this point that to offer you the definitive plan of what we'll do is quite difficult.

I can tell you the world will be a very different place. The way everybody in the broader world is thinking about what's happening in AI will be very different in ways that are difficult to comprehend. It's going to be a much more intense conversation. It may not just be up to what we decide, also."

HOW SSI WILL DECIDE WHAT CONSTITUTES SAFE AI?

"A big part of the answer to your question will require that we do some significant research. And especially if you have the view as we do, that things will change quite a bit... There are many big ideas that are being discovered. Many people are thinking about how as an AI becomes more powerful, what are the steps and the tests to do? It's getting a little tricky. There's a lot of research to be done. I don't want to say that there are definitive answers just yet. But this is one of the things we'll figure out."

Amazon-backed Anthropic rolls out Claude AI for big business

Anthropic rolled out Claude Enterprise, its biggest new product since its chatbot’s debut, designed for businesses looking to integrate Anthropic’s artificial intelligence.

GitLab, Midjourney, Menlo Ventures, North Highland Consulting and Sourcegraph have all been beta testers and early clients, Anthropic said.

Claude Enterprise allows clients to upload relevant documents with a much larger context window than before — the equivalent of 100 30-minute sales conversations, 100,000 lines of code or 15 full financial reports, according to Anthropic.

Amazon’s new Alexa voice assistant will use Claude AI - The Verge

The improved version of Alexa that Amazon’s expected to release this year will primarily be powered by Anthropic’s Claude artificial intelligence model, according to Reuters. The publication reports that initial versions of Amazon’s smarter, subscription-based voice assistant that used the company’s own AI proved insufficient, often struggling with words and responding to user prompts.

Exploring Gemma Scope: An Introduction to AI Interpretability and the Inner Workings of Gemma 2 2B

The inner workings of modern AIs are a mystery. This is because AIs are language models that are grown, not designed.

The science of understanding what happens inside AI is called interpretability.

This demo is a beginner-friendly introduction to interpretability that explores an AI model called Gemma 2 2B. It also contains interesting and relevant content even for those already familiar with the topic.

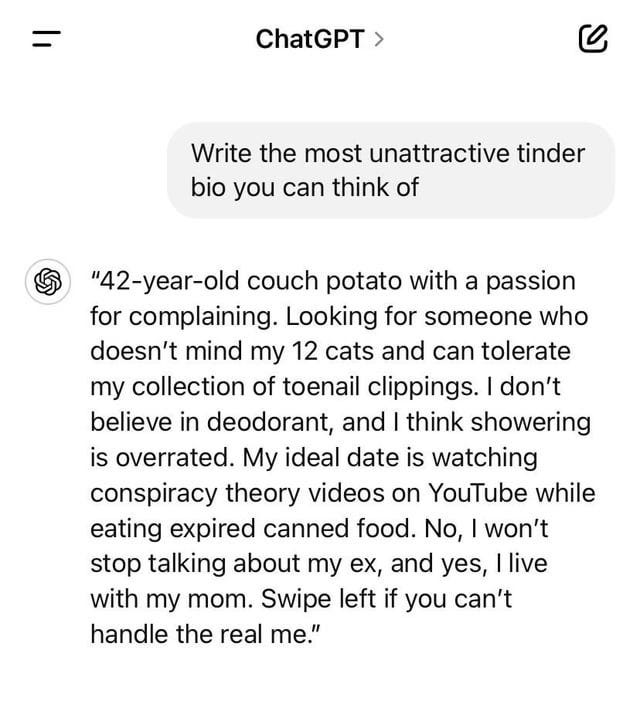

ChatGPT-generated Tinder bio goes viral for all the wrong reasons, Redditors have mixed reactions | Trending - Hindustan Times

People who set up a profile on a dating app generally make an effort to write a nice introduction for their bio. Some get help from friends or, these days, ask artificial intelligence to produce an impressive one. Recently, however, a Redditor asked AI to write the most unattractive Tinder bio, and it went viral on social media.

The Redditor asked ChatGPT to write the most unattractive Tinder bio it could think of.

California Passes Law Requiring Consent for AI Digital Replicas of Dead Performers

(MRM – in the absence of comprehensive legislation, states are passing legislation tied to their own interests).

The California state Senate has passed a law that requires consent for the use of dead performers’ likenesses for AI-created digital replicas.

SAG-AFTRA has been among the organizations championing the legislation as a means of helping the estates of deceased performers maintain some control over AI-created fakes and replicas of famous figures. The union was quick to herald the passage of AB 1836 in a statement after the Senate moved on the bill in an unusual Saturday session.

How AI Could Help Reduce Inequities in Health Care

AI generates excitement and trepidation in equal measure within health care circles. Optimists see the obvious potential for revolutionizing the efficiency and quality of care. Cynics worry that prioritization of these tools for the wealthiest and healthiest may widen the already stark health inequities observed across society.

Are these fears well-founded? Are we unleashing new tools that will only widen unfair gaps in health outcomes? While caution is appropriate when applying AI, we have a compelling vision for AI as the democratizer of health care that society has been crying out for.

Imagine the journey of a patient with a long-term condition. At each stage, innumerable factors will impact health outcomes: patients’ language and literacy skills, their ability and motivation to navigate complex health systems, and the biases of the health care staff and medical knowledge base used to treat their condition. Health care professionals face the challenge of integrating many contextual factors to design a personalized, effective, and easily accessible treatment plan for each patient. Patients from complex and disadvantaged backgrounds frequently suffer the consequences.

That’s where AI comes in as the revolutionary tool that could enable health systems to deliver better health care to everyone, especially the most vulnerable and underserved populations. AI’s capabilities in leveraging multiple different types of data to predict and intervene at all stages of a patient’s journey make it uniquely well placed to address the main causes of health inequities.

This article highlights a range of AI tools that have the potential to make a sizable impact on health inequities. Though most are not currently widely deployed, viewing these tools through the lens of equity shows how thoughtful design and implementation of AI can enable progress in tackling the seemingly insurmountable challenge of health inequities. The insights in this article are based on our work in advising health care and life sciences organizations. The examples we cite are from our continuous efforts to scan the horizon for cutting-edge advancements in the field. We have had no involvement in any of those companies and have no financial interests in them.

Scientists Make ‘Cyborg Worms’ with a Brain Guided by AI | Scientific American

Scientists have given artificial intelligence a direct line into the nervous systems of millimeter-long worms, letting it guide the creatures to a tasty target—and demonstrating intriguing brain-AI collaboration. They trained the AI with a methodology called deep-reinforcement learning; the same is used to help AI players learn to master games such as Go. An artificial neural network, software roughly modeled on biological brains, analyzes strings of actions and outcomes, extracting strategies for an AI “agent” to interact with its environment and achieve a goal.

In the study, published in Nature Machine Intelligence, researchers trained an AI agent to direct one-millimeter-long Caenorhabditis elegans worms toward tasty patches of Escherichia coli in a four-centimeter dish. A nearby camera recorded the location and orientation of every worm’s head and body; three times per second the agent received this information for the previous 15 frames, giving it a sense of the past and present at each moment. The agent could also turn on or off a light aimed at the dish. The worms were optogenetically engineered so certain neurons would become active or inactive in response to the light, sometimes prompting movement.

The research team tested six genetic lines in which the number of light-sensitive neurons ranged from one to all 302 the worms possessed. Stimulation had a different effect in each line, making the worm turn, for instance, or preventing it from turning. The scientists first collected training data by flashing lights randomly at the worms for five hours, then fed the data to the AI agent to find patterns before setting the agent loose.

With five of the six lines, including the line where all neurons responded to light, the agent learned to direct the worm to the target faster than if the worm had been left alone or the light had flashed randomly. What’s more, the agent and the worm cooperated: if the agent steered the worm straight toward a target but there were small obstacles in the path, the worm would crawl around them.
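
The 15-frame observation described in the study can be pictured as a rolling window over the camera feed. The sketch below is a minimal illustration, not the researchers' code: the class name, the coordinate format, and the loop are all hypothetical, and only the window length of 15 comes from the article.

```python
from collections import deque

FRAMES_PER_OBSERVATION = 15  # from the article: the previous 15 frames


class ObservationWindow:
    """Keeps only the most recent camera frames for the agent (hypothetical helper)."""

    def __init__(self, size=FRAMES_PER_OBSERVATION):
        # A bounded deque silently drops the oldest frame once it is full.
        self.frames = deque(maxlen=size)

    def add_frame(self, head_xy, body_xy):
        # Each frame stores made-up head and body coordinates in the dish.
        self.frames.append((head_xy, body_xy))

    def observation(self):
        # Oldest frame first, newest last.
        return list(self.frames)


window = ObservationWindow()
for t in range(20):
    window.add_frame(head_xy=(t, 0.0), body_xy=(t - 0.5, 0.0))

obs = window.observation()
print(len(obs))        # 15
print(obs[0][0][0])    # 5
print(obs[-1][0][0])   # 19
```

After 20 simulated frames, only frames 5 through 19 remain, which is the "sense of the past and present" the agent would see at that moment.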

M&S using AI as personal style guru in effort to boost online sales | Marks & Spencer | The Guardian

Marks & Spencer is using artificial intelligence to advise shoppers on their outfit choices based on their body shape and style preferences, as part of efforts to increase online sales.

The 130-year-old retailer is using the technology to personalise consumers’ online experience, and suggest items to buy.

Stephen Langford, the company’s director of online, said M&S was using AI to adapt the language used to address shoppers, tailoring to six different preferences such as emotional, descriptive language or more straightforward prose.

One of its aims is to personalise online interactions with shoppers, he said, such as prioritising products most relevant for an individual. Male shoppers would be less likely to be offered the latest deals on bras, for example.

Shoppers can also opt to fill out a quiz about their size, body shape and style preferences to receive relevant outfit ideas created by M&S’s AI-driven technology.

Langford said 450,000 M&S shoppers had used the quiz so far, which can pick outfits from 40m options.

NC musician charged with streaming fraud aided by AI

A North Carolina man was charged by federal authorities with music streaming fraud.

The U.S. Department of Justice alleges Michael Smith of Cornelius created thousands of fake accounts, or Bot Accounts, which would then stream songs he created with artificial intelligence in order to generate royalty revenue from streaming platforms like Spotify and Apple Music.

At one point, Smith had created a network of bots that was streaming more than 660,000 songs per day, generating more than $1.2 million in annual royalties. All told, he reaped unlawful royalties of around $10 million, according to the DOJ indictment.

"Michael Smith fraudulently streamed songs created with artificial intelligence billions of times in order to steal royalties," U.S. Attorney Damian Williams said in a statement unsealing the indictment. "Through his brazen fraud scheme, Smith stole millions in royalties that should have been paid to musicians, songwriters, and other rights holders whose songs were legitimately streamed."
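
For a sense of scale, the two figures reported above imply a royalty of roughly half a cent per stream. This is a back-of-the-envelope sketch that treats "more than 660,000" and "more than $1.2 million" as exact values and assumes a 365-day year, so the result is only approximate.

```python
# Figures from the item above, treated as exact for a rough estimate.
STREAMS_PER_DAY = 660_000
ANNUAL_ROYALTIES_USD = 1_200_000
DAYS_PER_YEAR = 365  # assumption: streaming continued every day of the year

streams_per_year = STREAMS_PER_DAY * DAYS_PER_YEAR
royalty_per_stream = ANNUAL_ROYALTIES_USD / streams_per_year

print(f"{streams_per_year:,} streams per year")   # 240,900,000 streams per year
print(f"${royalty_per_stream:.4f} per stream")    # $0.0050 per stream
```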

I turned to ChatGPT4 for rainy day toddler activities— and I wasn't disappointed | Tom's Guide

As a mom of a high-energy, neurodivergent 3-year-old, I’m constantly looking for ways to keep him busy. Most of the time, it feels like nothing wears out my tiny energy vampire. No number of trips to the trampoline park or local playground are ever enough.

So, on a particularly rainy weekend, I decided to let ChatGPT take the wheel. I was pleased by the age-appropriate activities and even more surprised that many of them gave him plenty of independent play so I could comfortably chill.

I used the prompt “Please suggest 10 creative and educational indoor activities that will keep my energetic 3-year-old son entertained on this rainy day.” (And yes, I know I don’t have to say “please” when chatting with AI; I just can’t help myself.)

Here is the list of 10 creative and educational indoor activities that it gave me to keep my son entertained on a rainy day.

ChatGPT Goes to Church: Should large language models write sermons and prayers?

In a 1999 episode of the church comedy The Vicar of Dibley, the vestry meets to discuss how to lift Vicar Geraldine’s spirits after her breakup with the womanizer Simon. Alice, the daft though creative verger, has a solution. “You know the series Walking with Dinosaurs?” she ventures. “Well, they recreated the dinosaurs digitally, just using a computer. I thought maybe we could do the same with Uncle Simon.” Mr. Horton, the churchwarden, repeats dryly: “Recreate him digitally?” “That’s right,” says Alice, “then send the digital Simon round to the vicarage.” A beat passes, and Mr. Horton clarifies: “So we get a holographic, two-dimensional human to marry the vicar?” Alice nods, and Mr. Horton looks around for help responding to her technically impossible and morally absurd suggestion. “Does anyone spot the defect in this plan?” he asks. No one does, and the vestry votes through Alice’s motion.

Working in artificial intelligence research since ChatGPT launched often makes me feel like hapless Mr. Horton. We researchers are surrounded by onlookers and their suggestions, some having neither desirable goals nor methods in the realms of reality. For example, some suggest outsourcing middle and high school teaching to a chatbot. Its inaccuracies “can be easily improved,” claims an academic dean at the Rochester Institute of Technology. “You just need to train the ChatGPT.” Or, as the tech entrepreneur Greg Isenberg suggested last year, we could task a language model (LM) with writing and marketing the next Great American Novel; all we have to do is code up a program and “start selling.” Each time a public figure urges this kind of unfettered and unrealistic application of LM technology to tasks far too human to morally bear automation, I hear the harried churchwarden’s voice: Recreate him digitally? Does anyone spot the defect in this plan?