The New News in AI in Business: 7/19/24

The latest AI news and info on ChatGPT and other tools. Enjoy!

New EY research finds AI investment is surging, with senior leaders seeing more positive ROI as hype continues to become reality | EY - US

After more than a year of hype around generative AI’s potential, business leaders report that they are already seeing a return on their artificial intelligence (AI) investments and plan to increasingly become more bullish, according to new data from Ernst & Young LLP (EY US). Among the 95% of senior leaders who report that their organizations are currently investing in AI, the number of companies investing $10 million or more in the technology is set to nearly double next year to 30%, up from 16% currently investing at that level. However, despite the forecasted investment boom, the survey also found that many leaders are ignoring the foundational functions AI needs to thrive.

The new EY AI Pulse Survey is the first in a series that asked 500 US senior leaders across industries about their AI technology investments, impacts and challenges. As leaders look to create sustainable momentum toward full-scale AI adoption, the study finds that senior leaders whose organizations are investing in AI are seeing tangible impact across business functions, including about three-quarters who are experiencing positive ROI on:

Operational efficiencies (77%)

Employee productivity (74%)

Customer satisfaction (72%)

What if the A.I. Boosters Are Wrong? - The New York Times

Despite the advent of personal computers, the internet and other high-tech innovations, much of the industrialized world is stuck in an economic growth slump, with O.E.C.D. countries expected to expand on aggregate just 1.7 percent this year. Economists sometimes call this phenomenon the productivity paradox.

The big new hope is that artificial intelligence will snap this mediocrity streak — but doubts are creeping in. And one especially skeptical paper by Daron Acemoglu, a labor economist at M.I.T., has triggered a heated debate.

Acemoglu concluded that A.I. would contribute only “modest” improvement to worker productivity, and that it would add no more than 1 percent to U.S. economic output over the next decade. That pales in comparison to estimates by Goldman Sachs economists, who predicted last year that generative A.I. could raise global G.D.P. by 7 percent over the same period.

The bullish camp has great hopes for A.I. Sam Altman of the ChatGPT maker OpenAI sees A.I. wiping out poverty. Jensen Huang, the C.E.O. of Nvidia, the dominant maker of the chips used to power A.I., says the technology has ushered in “the next industrial revolution.” But if the boosters are wrong, it could be trouble for the developed world, which is in desperate need of a productivity breakthrough as its work force ages and declines.

Goldman Sachs says AI is too expensive and unreliable — firm asks if 'overhyped' AI processing will ever pay off massive investments | Tom's Hardware

Corporations and investors have been spending billions of dollars on building AI. The current LLM models we use today, like GPT-4o, already cost hundreds of millions of dollars to train, and the next-generation models are already underway, going up to a billion dollars. However, Goldman Sachs, one of the leading global financial institutions, is asking whether these investments will ever pay off.

Sequoia Capital, a venture capital firm, recently examined AI investments and computed that the entire industry needs to make $600 billion annually just to break even on its initial expenditure. So, as massive corporations like Nvidia, Microsoft, and Amazon are spending huge amounts of money to gain a leg up in the AI race, Goldman Sachs interviewed several experts to ask whether investments in AI will actually pay off.

The expert opinions in the Goldman Sachs report are divided into two groups. One group is skeptical about AI, saying that it will deliver only limited returns to the American economy and that it won’t solve complex problems more economically than current technologies. The opposing view holds that the capital expenditure cycle on AI technologies seems promising and is similar to what prior technologies went through.

More than 40% of Japanese companies have no plan to make use of AI | Reuters

Nearly a quarter of Japanese companies have adopted artificial intelligence (AI) in their businesses, while more than 40% have no plan to make use of the cutting-edge technology, a Reuters survey showed on Thursday. The survey, conducted for Reuters by Nikkei Research, pitched a range of questions to 506 companies over July 3-12 with roughly 250 firms responding, on condition of anonymity.

About 24% of respondents said they have already introduced AI in their businesses and 35% are planning to do so, while the remaining 41% have no such plans, illustrating varying degrees of embracing the technological innovation in corporate Japan.

Why older workers are critical to AI adoption in the office

Despite the stereotype that older workers have a harder time adapting to new technology, these are the workers for whom AI has unique advantages.

Still, 30% of senior-level employees fear they’ll be fired for lacking AI skills, according to a recent report from online tutoring company Preply.

Someone with a more complex understanding of business is more effective at applying inputs and assessing outputs using knowledge and skills that AI has not mastered.

Managing AI Employees – Marginal Revolution

Lattice is a platform for managing employees. I believe this announcement from the Lattice CEO is real:

Today, Lattice made history: We became the first company to give digital workers official employee records in Lattice. This marks a huge moment in the evolution of AI technology and of the workplace. It takes the idea of an “AI employee” from concept to reality — and marks the start of a new journey for Lattice, to lead organizations forward in the responsible hiring of digital workers. Within Lattice’s people platform, AI employees will be securely onboarded, trained, and assigned goals, performance metrics, appropriate systems access, and even a manager. We know this process will raise a lot of questions and we don’t yet have all the answers, but we want to help our customers find them. So we will be breaking ground, bending minds, and leaning into learning. And we want to bring everyone along on this journey with us.

On the one hand, this is wild. On the other hand, it makes perfect sense to use our current system of managing employees–performance reviews, training, feedback, yearly bonuses and so on–to manage AIs. In essence, human management systems become the interface for AI workers. Every team will soon include AI workers. At first, this will be the AI stenographer who keeps the minutes, the admin who reminds everyone of their tasks, and the PowerPoint designer who puts together presentations, but quickly this will evolve and some AIs will naturally take on supervisory roles until “my boss is an AI” will not seem strange.

How AI and automation will reshape grocery stores and fast-food chains

While U.S. consumers facing continued food inflation hunt for deals and shift their spending habits accordingly, the food industry is working to stay competitive by investing in artificial intelligence to help curb high labor operating costs and reduce prices on some items. For example, fast-food chains like McDonald’s, Taco Bell and Wendy’s have reintroduced value menus. And big-box retailers Walmart and Target have lowered the price of certain grocery goods.

“It’s very difficult in this environment to engineer great profits, great sales and to keep customers satisfied,” said Neil Saunders, GlobalData’s managing director and retail analyst. “It’s a very difficult equation to balance. And I think until the economy is on a different footing, it’s not going to be balanced completely. That’s the reality of it.”

Amid this tough economic backdrop, McDonald’s announced its plan this year to spend $2 billion on employing AI and robots in restaurants and drive-thrus. And in 2022, grocery stores spent $13 billion on tech automations, according to research by FMI, The Food Industry Association. FMI expects spending on innovations like smart carts and revamped self-checkout aisles to soar 400% through 2025.

“We see a lot of upside over the next several years, with AI and technology being able to enhance customer experience while making the team members’ jobs a lot easier,” said Joe Park, Yum Brands’ chief digital and technology officer.

Generative AI models dominate workplaces as ChatGPT, Gemini gain more popularity

Generative artificial intelligence (AI) models are getting more prevalent in workplaces day by day, with ChatGPT and Google Gemini chatbots becoming the most popular, according to a study in 2024. The use of AI has become much more widespread in today’s world, from conducting simple tasks at work or at one’s leisure to generating content for entertainment.

The US-based artificial intelligence firm OpenAI’s ChatGPT grew to be a staple, as the chatbot became the most widely used at workplaces, according to the “Top 100 Generative AI for Work Tools” list on the flexos.work website.

ChatGPT topped the list, beating Google’s Gemini, formerly known as Bard, which came in second place, with Canva AI Suite, the generative tool for the online graphic design platform, coming third. Fourth place went to Quillbot, the AI writing companion, while Perplexity AI, the research chatbot, took fifth place among the most used AI tools at work. GitHub Copilot, the AI-powered code completion tool, came in sixth place, followed by the image generation tool Leonardo AI, the writing companion Grammarly AI, and Midjourney, the generative AI tool. The list revealed that the most used tools listed were generative pre-trained transformers, also known as GPT, with a share of 70%.

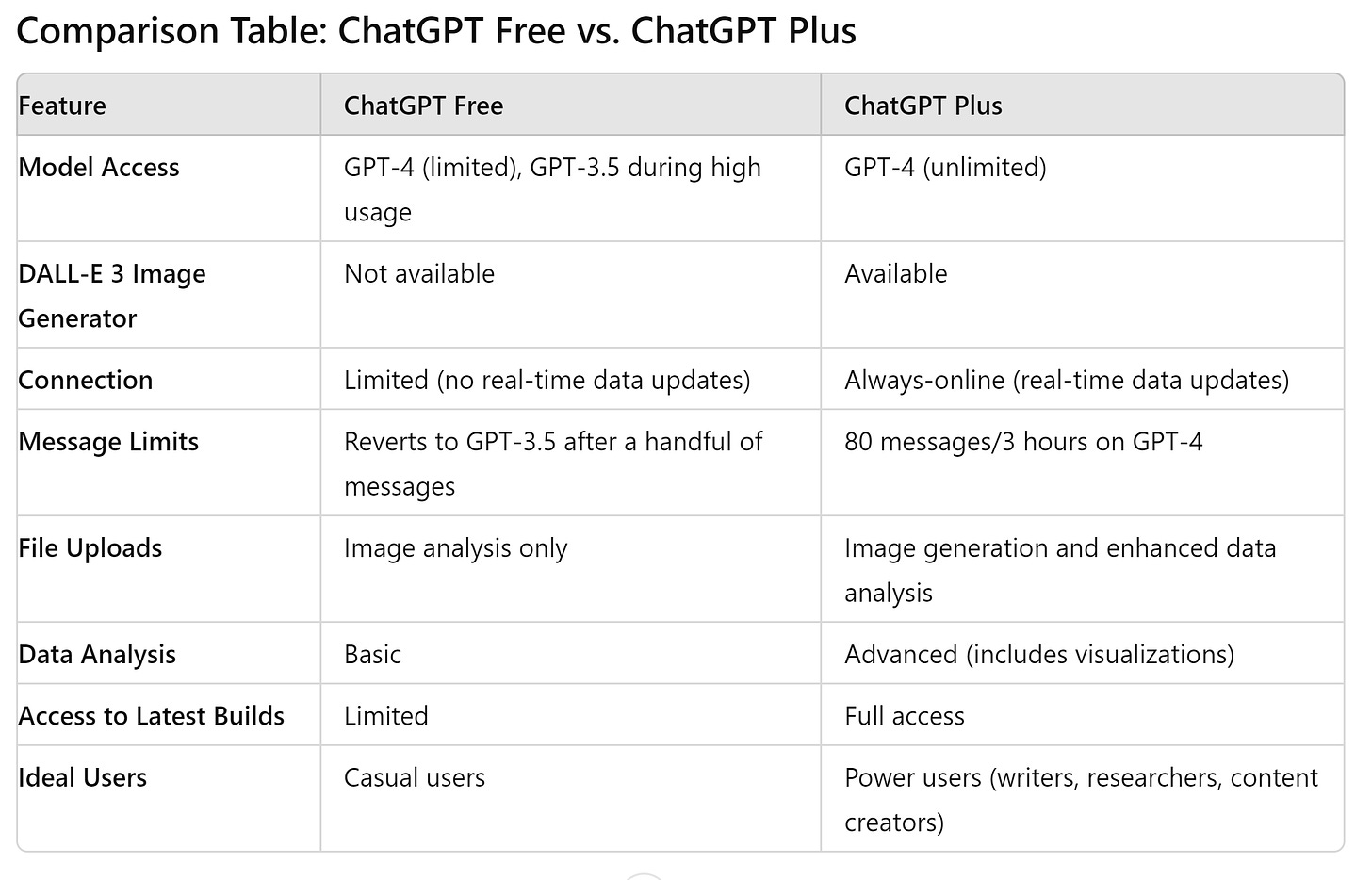

ChatGPT Free vs. ChatGPT Plus: Worth the $20 Upgrade?

MRM – here’s a summary ChatGPT made of this article.

When it comes to choosing between the free and paid versions of ChatGPT, many users find themselves questioning the value of a subscription. Both versions offer access to the latest GPT-4 model, known for its speed and accuracy. However, ChatGPT Free can occasionally revert to the older GPT-3.5 model during high traffic or excessive usage, and lacks some features available in the paid version. For most casual users, the differences may not justify the $20 monthly fee. Let's take a closer look at the features and benefits of ChatGPT Free versus ChatGPT Plus.

ChatGPT Free is a robust tool that meets the needs of most users, offering reliable and accurate responses with occasional limitations. ChatGPT Plus, on the other hand, provides enhanced capabilities such as unlimited GPT-4 access, real-time data updates, and advanced features like the DALL-E 3 image generator and enhanced data analysis. Custom GPTs in the Plus version offer full customization options, allowing users to tailor the AI to specific needs and preferences. While the free version suffices for general use, the paid version is geared towards professionals who require consistent, high-quality performance and additional functionalities.

OpenAI releases levels of artificial general intelligence (AGI)

AI experts disagree over whether today's large language models, which excel at generating text and images, will ever be capable of broadly understanding the world and flexibly adapting to novel information and circumstances.

Driving the news: OpenAI has internally shared definitions for five levels of artificial general intelligence (AGI), according to Bloomberg. An OpenAI document Bloomberg reproduced defines the levels:

Chatbots: AI with conversational language

Reasoners: human-level problem-solving

Agents: systems that can take actions

Innovators: AI that can aid in invention

Organizations: AI that can do the work of an organization

State of play: At a company meeting last week, per Bloomberg, OpenAI leaders told staff their systems currently worked at level 1 but were "on the cusp" of achieving level 2.

What exactly is an AI agent?

AI agents are supposed to be the next big thing in AI, but there isn’t an exact definition of what they are. To this point, people can’t agree on what exactly constitutes an AI agent. At its simplest, an AI agent is best described as AI-fueled software that does a series of jobs for you that a human customer service agent, HR person or IT help desk employee might have done in the past, although it could ultimately involve any task. You ask it to do things, and it does them for you, sometimes crossing multiple systems and going well beyond simply answering questions.

Seems simple enough, right? Yet it is complicated by a lack of clarity. Even among the tech giants, there isn’t a consensus. Google sees them as task-based assistants depending on the job: coding help for developers; helping marketers create a color scheme; assisting an IT pro in tracking down an issue by querying log data.

For Asana, an agent may act like an extra employee, taking care of assigned tasks like any good co-worker. Sierra, a startup founded by former Salesforce co-CEO Bret Taylor and Google vet Clay Bavor, sees agents as customer experience tools, helping people achieve actions that go well beyond the chatbots of yesteryear to help solve more complex sets of problems.

This lack of a cohesive definition does leave room for confusion over exactly what these things are going to do, but regardless of how they’re defined, the agents are for helping complete tasks in an automated way with as little human interaction as possible.

Rudina Seseri, founder and managing partner at Glasswing Ventures, says it’s early days and that could account for the lack of agreement. “There is no single definition of what an ‘AI agent’ is. However, the most frequent view is that an agent is an intelligent software system designed to perceive its environment, reason about it, make decisions, and take actions to achieve specific objectives autonomously,” Seseri told TechCrunch.

Can ChatGPT Replace Doctors? Generative AIs Provide New Tool in Dermatology

The Society for Pediatric Dermatology Annual Meeting, held in Toronto, Ontario, Canada from July 11 to July 14, 2024, featured a session focused on numerous ways that ChatGPT and other generative artificial intelligence (AI) systems can help dermatologists conduct their practices. Albert C. Yan, MD, FAAP, FAAD, a pediatric dermatologist at the Children’s Hospital of Philadelphia, gave a presentation to demonstrate how doctors can use generative pre-trained transformers (GPTs) to streamline processes, as well as words of caution and best practices for using AI in practice.

GPTs, Yan explained, are AI systems trained on multimodal input that generate content, including text, images, audio, and video. These include ChatGPT and Google’s Gemini, among other GPTs. Individuals interact with these GPTs through the prompt bar, and there’s a method to getting what you want out of the GPT.

“One of these [high-yield tips] is not just telling the AI—ChatGPT or Gemini—what you want, but also telling it what you don’t want,” Yan said. These AI generators can come up with very long answers to more general questions, so specifying what you want out of the generative AI is important to ensure that you get the information needed. For example, Yan said, if you want information about pustular eruptions, you can specify those that are common in the neonatal period. Asking the AI for a step-by-step process can also help improve accuracy, and adding “be concise” to your prompt can help get a shorter answer.
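As a rough illustration of the tips above, a prompt can be assembled from what you want, what you don’t want, and the optional “step by step” and “be concise” instructions. The sketch below is hypothetical: the `build_prompt` helper, its parameter names, and the example exclusions are assumptions made for illustration and do not come from Yan’s presentation or from any chatbot’s API.

```python
def build_prompt(question, want=(), avoid=(), step_by_step=False, concise=False):
    """Assemble a prompt string from a question plus optional constraints.

    Hypothetical helper: it only builds text and does not call any AI service.
    """
    parts = [question.strip()]
    if want:
        # Say what you want...
        parts.append("Focus on: " + "; ".join(want) + ".")
    if avoid:
        # ...and also what you don't want.
        parts.append("Do not include: " + "; ".join(avoid) + ".")
    if step_by_step:
        parts.append("Work through this step by step.")
    if concise:
        parts.append("Be concise.")
    return " ".join(parts)


# Example values are illustrative assumptions, not clinical guidance.
prompt = build_prompt(
    "List causes of pustular eruptions.",
    want=["common conditions in the neonatal period"],
    avoid=["rare conditions", "conditions seen only in adults"],
    step_by_step=True,
    concise=True,
)
print(prompt)
```

The resulting string can be pasted into the prompt bar of whichever chatbot you use.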

In health care, Yan said that AI scribes are an innovation that doctors can use. Traditionally, a scribe is an assistant who helps the doctor document patient notes in the electronic health record, but these new tools can simplify the process.

“There was this 1 study done…that looked at ambient scribes for clinicians,” Yan said. “And in essence, it saved the users about an hour to an hour and a half per day of scribe typing time with their electronic medical record. The more you use it, the better it helps you reduce your time in the charts.”

Op-ed: How well can AI chatbots mimic doctors in a treatment setting?

To secure a medical license in the United States, aspiring doctors must successfully navigate three stages of the U.S. Medical Licensing Examination, with the third and final installment widely regarded as the most challenging. It requires candidates to answer about 60% of the questions correctly and, historically, the average passing score hovered around 75%.

When we subjected the major large language models to the same Step 3 examination, their performance was markedly superior, achieving scores that significantly outpaced many doctors. But there were some clear differences between the models.

Here’s how they scored:

ChatGPT-4o (OpenAI) — 49/50 questions correct (98%)

Claude 3.5 (Anthropic) — 45/50 (90%)

Gemini Advanced (Google) — 43/50 (86%)

Grok (xAI) — 42/50 (84%)

HuggingChat (Llama) — 33/50 (66%)

OpenAI unveils cheaper small AI model GPT-4o mini | Reuters

ChatGPT maker OpenAI said on Thursday it was launching GPT-4o mini, a cost-efficient small AI model, aimed at making its technology more affordable and less energy-intensive, allowing the startup to target a broader pool of customers.

Microsoft-backed (MSFT.O) OpenAI, the market leader in the AI software space, has been working to make it cheaper and faster for developers to build applications based on its model, at a time when deep-pocketed rivals such as Meta (META.O) and Google (GOOGL.O) rush to grab a bigger share in the market.

GPT-4o mini scored 82% on the MMLU benchmark, compared with 77.9% for Google's Gemini Flash and 73.8% for Anthropic's Claude Haiku, according to OpenAI.

Smaller language models require less computational power to run, making them a more affordable option for companies with limited resources looking to deploy generative AI in their operations.

With the mini model currently supporting text and vision in the application programming interface, OpenAI said support for text, image, video and audio inputs and outputs would be made available in the future.

OpenAI's new 'Project Strawberry' could give ChatGPT more freedom to search the web and solve complex problems

OpenAI is constantly working on new models with several teams exploring different approaches to achieving the long-term goal of artificial general intelligence (AGI), and some of those ideas are working out better than others.

According to Reuters, there is a new project code-named "Strawberry" that some on X predict could be a new version of the infamous "reasoning mode" Q* revealed through a leak last year. Project Strawberry seems to be a new model or system capable of improved reasoning, including going online to find information in preparation for solving a particularly complex problem.

The Reuters report, based on internal documentation and comments from an insider, suggests this could be a significant upgrade in artificial intelligence capabilities. According to the contact, Strawberry is a "tightly kept secret" even inside OpenAI, but it seems that, while it might be part of ChatGPT in the future, its main purpose is to perform "deep research" beyond simple user queries. Some of the capabilities include being able to plan far enough ahead when responding to navigate the internet autonomously and reliably, without having to be told to do so by the user.

Why Americans Believe That Generative AI Such As ChatGPT Has Consciousness

Generative AI, as embodied by OpenAI’s work on ChatGPT, has progressed by leaps and bounds in recent years. The company and its rivals often talk about a vision for artificial general intelligence (AGI) with human-like intelligence. OpenAI even has a new scale to measure how close their models are to achieving AGI. But, even the most optimistic experts don’t suggest that AGI systems will be self-aware or capable of true emotions. Still, of the 300 people participating in the study, 67% said they believed ChatGPT could reason, feel, and be aware of its existence in some way.

There was also a notable correlation between how often someone uses AI tools and how likely they are to perceive consciousness within them. That’s a testament to how good ChatGPT is at mimicking humans, but it doesn’t mean the AI has awakened. The conversational approach of ChatGPT likely makes it seem more human, even though no AI model works like a human brain at all. And while OpenAI is working on an AI model capable of doing research autonomously called Strawberry, that’s still different from an AI that is aware of what it is doing and why.

The belief in AI consciousness could have major implications for how people interact with AI tools. On the positive side, it encourages manners and makes it easier to trust what the tools do, which could make them easier to integrate into daily life. But trust comes with risk, from overreliance on them for decision-making to, at the extreme end, emotional dependence on AI and fewer human interactions.

The researchers plan to look deeper into the specific factors making people think that AI has consciousness and what that means on an individual and societal level. It will also include long-term looks at how those attitudes change over time and with regard to cultural background. Understanding public perceptions of AI consciousness is crucial not only to developing AI products but also to the regulations and rules governing their use.

“Alongside emotions, consciousness is related to intellectual abilities that are essential for moral responsibility: the capacity to formulate plans, act intentionally, and have self-control are tenets of our ethical and legal systems,” Colombatto said. “These public attitudes should thus be a key consideration in designing and regulating AI for safe use, alongside expert consensus.”

Meta won’t release its multimodal Llama AI model in the EU - The Verge

Meta says it won’t be launching its upcoming multimodal AI model — capable of handling video, audio, images, and text — in the European Union, citing regulatory concerns. The decision will prevent European companies from using the multimodal model, despite it being released under an open license.

“We will release a multimodal Llama model over the coming months, but not in the EU due to the unpredictable nature of the European regulatory environment,” Meta spokesperson Kate McLaughlin said to The Verge.

Just last week, the EU finalized compliance deadlines for AI companies under its strict new AI Act. Tech companies operating in the EU will generally have until August 2026 to comply with rules around copyright, transparency, and AI uses like predictive policing.

Meta’s decision follows a similar move by Apple, which recently said it would likely exclude the EU from its Apple Intelligence rollout due to concerns surrounding the Digital Markets Act. Meta has also halted plans to release its AI assistant in the EU and paused its generative AI tools in Brazil — both due to concerns raised about data protection compliance.

China's AI progress stalls due to government censorship

China's government-led push to outpace the U.S. in generative AI is hitting speed bumps created by the Chinese government's need to control political speech, according to new reports. Even as the U.S. tries to restrict China's access to high-end chips and hardware sold by its allies, the demands of China's authoritarian system could prove more decisive in tipping the global AI race America's way.

Driving the news: Several stories in the Western press this week detailed the drag on China's AI progress created by the country's chief regulator, the Cyberspace Administration of China (CAC).

The Financial Times reported Wednesday that the CAC is requiring elaborate reviews of the AI models developed by China's tech giants and startups — including ByteDance, Alibaba, Moonshot and 01.AI.

"China's operational guidance to AI companies published in February says AI groups need to collect thousands of sensitive keywords and questions that violate 'core socialist values', such as 'inciting the subversion of state power' or 'undermining national unity,'" the Financial Times notes.

Per the Wall Street Journal, "The internet regulator requires companies to prepare between 20,000 and 70,000 questions designed to test whether the models produce safe answers, according to people familiar with the matter. Companies must also submit a data set of 5,000 to 10,000 questions that the model will decline to answer, roughly half of which relate to political ideology and criticism of the Communist Party."

Case in point: China's chatbots — like its search engines and social media spaces — can't talk about the Tiananmen Square uprising of 1989 or question the legitimacy or policies of President Xi Jinping.

The bots are designed to refuse to answer politically controversial queries.

If users ask too many such questions in a row, the systems must end the conversation.

Between the lines: China's AI firms arrived late to the generative AI game to begin with, and having to toe the government's political line is making it harder for them to catch up.

Training their models is slower when they have to scour data to remove politically sensitive information.

And no matter how much effort they put into sanitizing their bots' output, the random and unpredictable nature of generative AI means the government can never be 100% certain they won't stray into sedition.

Meanwhile, all the development time and computing resources companies put into building these ideological guardrails aren't available for making the AIs faster and more useful.