The Roadmap to Implementing Generative AI

Overcoming Fear, Uncertainty and Doubt to Build Generative AI Solutions

Where are firms in terms of implementing generative AI?

There has been a lot of talk and hype about generative AI since ChatGPT, built on GPT-3.5, came out a year ago in November 2022, and these tools continue to be in the news. Microsoft, Google, Meta, Amazon and others continue to pour money into creating new offerings or integrating generative AI capabilities into existing applications. Some, like Microsoft Copilot, are coming out as this is written.

In fact, Salesforce’s State of IT report found 86% of IT leaders think generative AI will play a big role in their firms and 57% believe it’s a “game changer.”

However, almost all (99%) feel they need to put guardrails in place to use generative AI responsibly, and most believe their company isn’t ready to deploy it yet (65%).

Here’s why.

Concern over security threats (71%).

Lack of employee skills (66%).

Inability to integrate the technology (60%).

Lack of a unified data strategy (59%).

In sum, there is a combination of FUD factors (fear, uncertainty and doubt) that is moderating the hype and slowing the rapid implementation of generative AI at the enterprise level.

The FUD Factor

In addition to the factors brought out in the Salesforce survey, there are a host of concerns organizations have about generative AI applications. These include:

Legal risks: There are two questions here. One, are those whose data was scraped to train the large language models owed anything for their input? Two, who owns the output of generative AI?

Bias & Hallucinations: Although generative AI is getting better at minimizing hallucinations, the production of false information is a problem when users expect accuracy from computer output. Also, bias in the training data and in the RLHF (reinforcement learning from human feedback) process raises ethical concerns.

Deep Fakes: The creation of “fake news” via generative AI tools is another issue that gives these applications a bad image.

Employee Fear: At the same time employees need to be upskilled to use Gen AI tools, those same employees are worried that these apps will take their jobs. This is unsettling for many in organizations.

Use Cases: Leaders know they have to implement generative AI but they are unsure where to apply it first - which use cases will have the most impact with the least cost. This is made harder because…

The Technology is Moving Fast: It’s impossible to keep up with all the new tools in the Gen AI space and which to bet on. Personally, I feel like Sisyphus, who was condemned by the gods to roll a rock up a hill all day only to have it roll back down again at sunset. It’s the same with Gen AI - for a brief moment I feel caught up after reading all the news but in the morning my inbox is filled with new news about the technology.

Costs: These tools are not cheap at an enterprise level. It appears Microsoft’s Copilot will significantly increase the cost of Office 365, especially when you multiply the number of users by the per-user monthly cost by 12 months.
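To make that multiplication concrete, here is a minimal sketch of the annual cost calculation. The user count and the per-user monthly price below are hypothetical placeholders chosen for illustration, not actual Microsoft pricing; substitute your own figures.

```python
def annual_license_cost(users: int, monthly_cost_per_user: float, months: int = 12) -> float:
    """Annual cost = number of users x monthly cost per user x number of months."""
    return users * monthly_cost_per_user * months


# Hypothetical inputs for illustration only (not quoted prices).
example_users = 1_000
example_monthly_cost = 25.0  # assumed per-user monthly add-on price

total = annual_license_cost(example_users, example_monthly_cost)
print(f"Estimated annual cost: ${total:,.0f}")
```

Even a modest per-user fee becomes a large line item once it is multiplied across the whole user base for a full year, which is why this cost deserves attention early in planning.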

Partner Choice: Tied to betting on the right technology is betting on the right partner. Organizations need to take a gamble on who has the best Gen AI roadmap at the same time the technology is technically and legally in flux.

The Promise of Generative AI

All that said, forward-thinking leaders realize the potential for Gen AI is huge in terms of productivity, creativity and knowledge-access. Organizations are implementing it at the enterprise level in many customer-facing functions such as marketing, sales, R&D, and customer service as well as using it to improve internal operations. This is in addition to individuals using it to improve their impact on the organization.

These leaders know there is not a choice between risk and reward. Instead, they understand the need to move forward to reap the benefits while managing the downside.

A Way Forward

Below is the three-phase approach I’ve built to move an organization from uncertainty about generative AI to implementation. It draws partly on lessons learned from our approach at my organization (UNC-Chapel Hill), with some additional thoughts added.

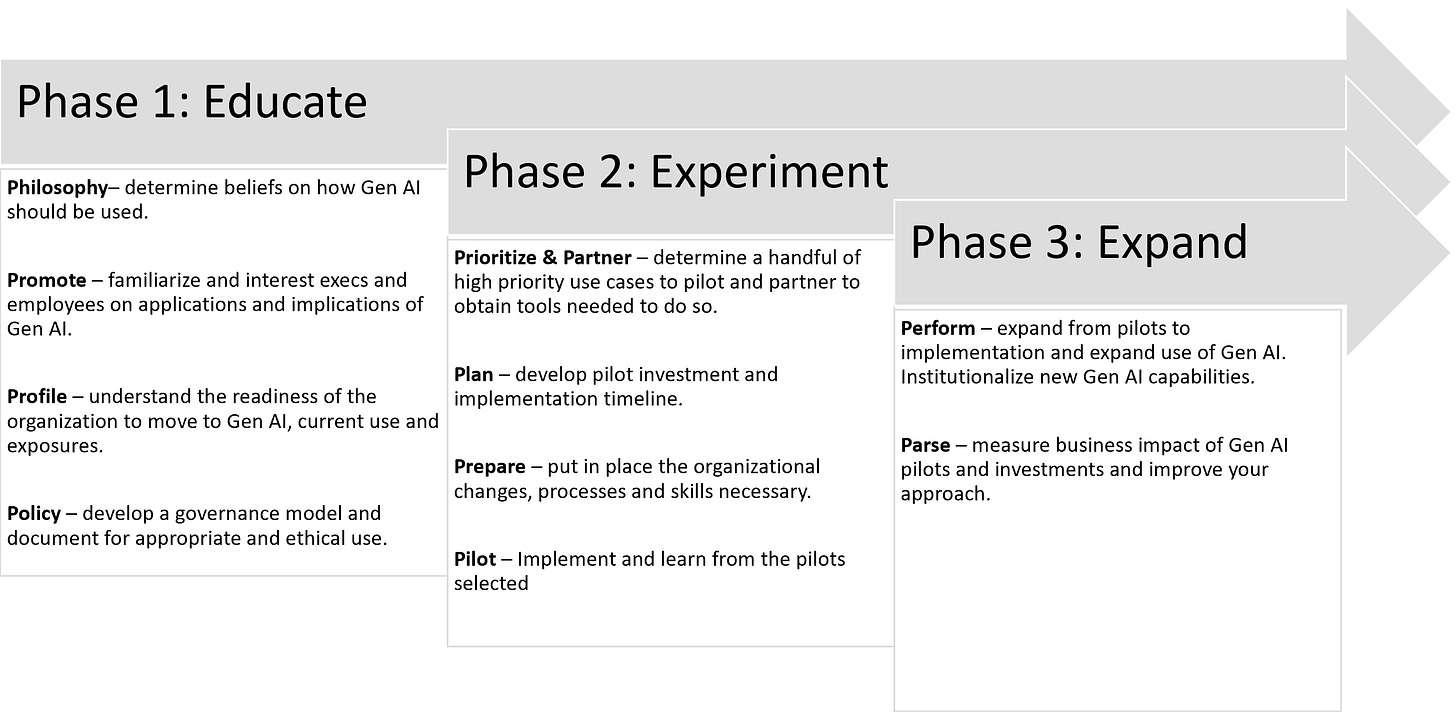

There are three phases: Educate, Experiment and Expand.

Educate

Activities in this phase need to be worked on in parallel because a) the phase needs to move relatively quickly and b) work in one area often informs work in another (e.g., work on policy will be part of promotion activities and will be built around the generative AI philosophy adopted).

Philosophy: Determining a philosophy about generative AI is essential as it will help guide the organization’s education, strategy and implementation. A philosophy that worked for us is that “Generative AI should help you think. Not think for you.” We want our faculty, staff and students to apply Gen AI to improve their thought processes, not just replace them.

Promotion: This is twofold. A big part of it is educating the organization on what Gen AI is, what people can do with it, how it will change the business, and the overall philosophy and policy. The other part is evangelizing - getting people excited about the possibilities while also sharing the risks with them.

Profiling: This deals with determining the organization’s understanding of and readiness for implementing generative AI. This can be done via surveys of individuals as well as organizational leaders to get a sense of their ability and willingness to adopt generative AI applications.

Policy: This is about the Do’s and Don’ts of using Gen AI, what is and isn’t proper use of these tools. This will require a lot of cross-functional discussion since different organizations will have different uses and perspectives. However, it needs to be done quickly and then communicated so the community knows the rules.

Experiment

Prioritizing: This step focuses on gathering and ranking use cases that come from within the organization. You’ll need to work in concert with the subunits to gather the use cases, then prioritize those that have a big impact but are easier lifts. This will raise the probability that the first attempts at implementation are successful.
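One simple way to rank use cases by "big impact but easier lift" is an impact-to-effort score. The sketch below is an illustration under assumptions, not the method we used: the 1-to-5 scales, the ratio itself, and the example use cases are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    impact: int  # assumed scale: 1 (low) to 5 (high)
    effort: int  # assumed scale: 1 (easy lift) to 5 (hard lift)


def prioritize(use_cases):
    """Sort by impact-to-effort ratio, highest first; ties go to higher impact."""
    return sorted(
        use_cases,
        key=lambda uc: (uc.impact / uc.effort, uc.impact),
        reverse=True,
    )


# Hypothetical candidates gathered from subunits.
candidates = [
    UseCase("Draft marketing copy", impact=3, effort=1),
    UseCase("Customer service assistant", impact=5, effort=4),
    UseCase("Internal knowledge search", impact=4, effort=2),
]

for uc in prioritize(candidates):
    print(f"{uc.name}: score {uc.impact / uc.effort:.2f}")
```

A ratio like this favors quick wins. An organization that cares more about strategic impact could weight the impact term more heavily instead.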

Partner: Use case prioritization and partner selection go hand-in-hand. Knowing the use cases will drive which partnerships you make. Making deals with outside vendors that have the Gen AI capabilities you need, and whose attention you can gain, will determine success or failure.

Plan: Once you have use cases and technology partners you can begin to develop a set of pilot cases and an investment and implementation timeline. Again, focusing on low-hanging Gen AI fruit will shorten the timeline and reduce the investment required.

Prepare: All of the above is happening in parallel, as is this action. The overall organization will continue to need education and encouragement to use generative AI tools, especially those that are integrated into apps people are already using. Units that are working on pilots will require additional attention and resources to prepare them. Lastly, some enterprise-wide actions may be needed, such as creating a centralized unit that will enable Gen AI implementation across the organization, hiring talent with these skills, and buying or accessing the computing power to run these apps.

Pilot: As the above actions come to fruition, pilots can be implemented and learnings documented and shared. Progress on these pilots must be monitored weekly to ensure they are kept on the path to success.

Expand

Perform: This step can occur once pilots come to fruition, learnings are absorbed and the organization becomes more Gen AI capable. New pilots can be implemented for the next wave of use cases, expanding the use of generative AI throughout the business.

Parse: This step, also called measure, is all about putting in place the metrics that determine how effective the generative AI applications are at improving the business, as well as checking how well the implementation process is proceeding so it can be improved.

Approach Summary

Of course, the phases are not as distinct from one another as this outline suggests, but in general, these are the stages an organization should plan for in order to progress quickly and confidently towards implementing generative AI solutions. If that is the path you are on, I hope the process I’ve outlined offers some guidance and structure to help you and your team move forward.

“Inspired” vs. “Created”

One of the things I’ve been mulling over is how much credit the person doing the prompting deserves for what generative AI produces. For example, if I execute a prompt that produces an image like the one below using DALL-E, have I “created” it in the same sense as if I had drawn or painted it?

This was a question I brought up on a UNC Carolina Public Humanities AI panel. From the conversation, I think one of my fellow panelists hit the right note when he used the term “inspired” instead of “created” as the best way to describe how credit should be given to the prompter. The prompter inspires what is generated, but generative AI actually creates it. At least that wording makes the most sense to me.

Great post. I disagree with your last statement, where you say that AI actually creates it. It seems that this only applies to an aborted process where we would surrender to what generative AI produces in response to the prompt. The way it actually works in my experience is something like: you prompt, AI generates, you evaluate, sense, re-prompt, AI adjusts, and so on. The final content is totally yours. This discussion point is like asking if a painter is creating or if it's their hand that is creating. It's the painter. I suspect this discussion topic will soon fade away as we get better at using AI as a tool.